The control center for AI localization

Stop wasting tokens and losing context. Connect your preferred models (Gemini, Claude, OpenAI, or any model via OpenRouter) to your codebase and let SimpleLocalize feed the AI everything it needs to translate accurately.

We power your product localization

From first commit to global launch

Quality Control

The context engine

Raw AI often fails because it doesn't know if “Home” is a house or a navigation button. SimpleLocalize solves this by feeding every translation request rich metadata that eliminates ambiguity before the model even starts.

The result: translations that are accurate on the first pass, fewer review cycles, and context that actually works, not copy-pasted instructions you have to rewrite every time.

What SimpleLocalize sends to the AI

Every translation request is enriched with structured metadata. No manual prompt engineering required.

Project context

Define what your product is, who it's for, and how it should sound. Set it once at the project level and every AI request inherits it automatically.

Key-level descriptions

Add descriptions to individual keys so the AI knows exactly what each string means. No more ambiguous translations or misunderstandings.

Constraint awareness

Hard character limits, placeholder preservation, and HTML tag rules are enforced automatically. No more layout-breaking translations.

Tone & formality instructions

Specify formal vs. informal tone, regional preferences, or industry-specific jargon per language or project. The AI adapts its output accordingly.
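The constraint rules described above (character limits, placeholder preservation, HTML tags) can be pictured as checks run on a translation once it comes back from the model. The sketch below is a minimal illustration in Python, not SimpleLocalize's implementation: the function name `check_translation`, the `{name}` placeholder syntax, and the error messages are all assumptions made for this example.

```python
import re
from collections import Counter
from typing import List, Optional

# Assumed placeholder syntax: {name}. Real projects may use other styles.
PLACEHOLDER = re.compile(r"\{[A-Za-z0-9_]+\}")
HTML_TAG = re.compile(r"</?[A-Za-z][^>]*>")


def check_translation(source: str, translated: str,
                      char_limit: Optional[int] = None) -> List[str]:
    """Return a list of constraint violations (empty when everything passes)."""
    problems = []
    # Placeholders must appear the same number of times, spelled the same way.
    if Counter(PLACEHOLDER.findall(source)) != Counter(PLACEHOLDER.findall(translated)):
        problems.append("placeholders differ from source")
    # HTML tags must survive translation unchanged.
    if Counter(HTML_TAG.findall(source)) != Counter(HTML_TAG.findall(translated)):
        problems.append("HTML tags differ from source")
    # Hard character limit, if one is set for the key.
    if char_limit is not None and len(translated) > char_limit:
        problems.append(f"exceeds {char_limit} characters")
    return problems


ok = check_translation("Hello, <b>{name}</b>!", "Hallo, <b>{name}</b>!", char_limit=30)
bad = check_translation("Hello, <b>{name}</b>!", "Hallo, {Name}!", char_limit=10)
print(ok)   # no violations
print(bad)  # three violations
```

A translation that fails any check could be rejected or sent back to the model with the violation attached, which is one way to keep layout-breaking output out of a release.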

Cost Efficiency

Smart caching: only translate what changed

Dumping your entire JSON file into ChatGPT and re-translating everything is expensive and risky. Previously correct translations can "drift" between runs, introducing subtle inconsistencies that are hard to catch.

SimpleLocalize acts as a translation memory and AI cache. When you update your keys, the system identifies the delta (only the new or changed strings) and sends just those to the AI. Everything else stays locked and consistent, so you save money on tokens and prevent hallucination drift in previously approved translations.
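One common way to find such a delta is to store a fingerprint of each source string and compare it on the next run. The following Python sketch illustrates the idea under that assumption. The helper names `fingerprint` and `find_delta` are hypothetical, and nothing here is taken from SimpleLocalize's internals.

```python
import hashlib
from typing import Dict, List


def fingerprint(text: str) -> str:
    """Stable hash of a source string, used to detect edits."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def find_delta(source: Dict[str, str], cache: Dict[str, str]) -> List[str]:
    """Return keys that are new or whose source text changed.

    `cache` maps each key to the fingerprint recorded at its last translation.
    """
    return sorted(
        key for key, text in source.items()
        if cache.get(key) != fingerprint(text)
    )


# Fingerprints stored after the previous translation run.
cache = {
    "nav.home": fingerprint("Home"),
    "cta.signup": fingerprint("Sign up"),
}

# Current source strings: one unchanged, one edited, one new.
source = {
    "nav.home": "Home",
    "cta.signup": "Sign up for free",
    "cta.login": "Log in",
}

delta = find_delta(source, cache)
print(delta)  # ['cta.login', 'cta.signup']
```

Only the keys in `delta` would be sent to the model. `nav.home` keeps its existing translation untouched, which is what prevents drift.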

Model Agnostic

Your workflow, your model

Whether it's the speed of Gemini, the reasoning of Claude, or the ubiquity of OpenAI, swap models without changing your workflow. Use custom prompts and provider-specific settings too.

Google Gemini

Optimized for speed and cost. Ideal for bulk-translating large batches of UI strings where throughput matters more than nuance.

Anthropic Claude

Excels at creative and nuanced copy. Use it for marketing pages, onboarding flows, and content where tone and cultural fit are critical.

OpenAI

The general-purpose workhorse. Strong across languages with broad coverage and reliable output for everyday localization tasks.

OpenRouter

Swap models per task or per project via OpenRouter. Use fast models for bulk jobs and premium models for high-stakes copy, no lock-in.

Plus DeepL and Google Translate for traditional machine translation. Use them as a cost-effective baseline, then refine with AI where context matters.

Enterprise Privacy

Local AI for teams that can't use the cloud

Some teams can't send translation data to external APIs due to regulatory requirements, NDA-protected content, or internal policy. SimpleLocalize supports local LLMs via Ollama or any OpenAI-compatible endpoint, so your data never leaves your infrastructure.

Air-gapped translation

Run models like Llama, Mistral, or Gemma locally. Translations are generated on your own hardware with zero external network calls.

Bring your own API key

Connect your own OpenAI, OpenRouter, or custom endpoint. You control your own usage, billing, and rate limits directly with the provider.

Enterprise-ready

SOC 2 readiness, SSO, and role-based access. AI localization without compromising your security posture.

Side by side

Manual AI copy-paste vs. SimpleLocalize AI

Pasting strings into ChatGPT works for a demo. It doesn't work for a product with 50 languages and thousands of keys.

The Technical Edge

MCP: Let AI agents translate your app

SimpleLocalize ships an official MCP (Model Context Protocol) server that lets AI agents in Cursor, Windsurf, Claude Desktop, or any MCP-compatible client interact directly with your localization data.

Your AI coding assistant can query missing translations, update keys, and trigger auto-translation without you ever leaving the IDE. This is the bridge between AI-driven dev cycles and production-ready localization.

The agent queries the MCP server for untranslated keys in a given language.

The agent pushes new or changed translation keys directly from your code editor.

The agent triggers AI translation with full project context, right from your workflow.
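Under the hood, MCP clients talk to servers using JSON-RPC 2.0 messages, and tools are invoked with the `tools/call` method. The Python sketch below shows what the three steps above might look like on the wire. The tool names (`get_missing_translations`, `update_translation_keys`, `auto_translate`) and their arguments are hypothetical placeholders, not the actual tools exposed by the SimpleLocalize MCP server.

```python
import json
from typing import Any, Dict


def tool_call(request_id: int, tool: str, arguments: Dict[str, Any]) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool names and arguments, one per step listed above.
steps = [
    tool_call(1, "get_missing_translations", {"language": "de"}),
    tool_call(2, "update_translation_keys", {"keys": {"cta.login": "Log in"}}),
    tool_call(3, "auto_translate", {"language": "de"}),
]
for raw in steps:
    print(raw)
```

In practice the MCP client inside your editor builds and sends these messages for you. The sketch only makes the shape of the exchange visible.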

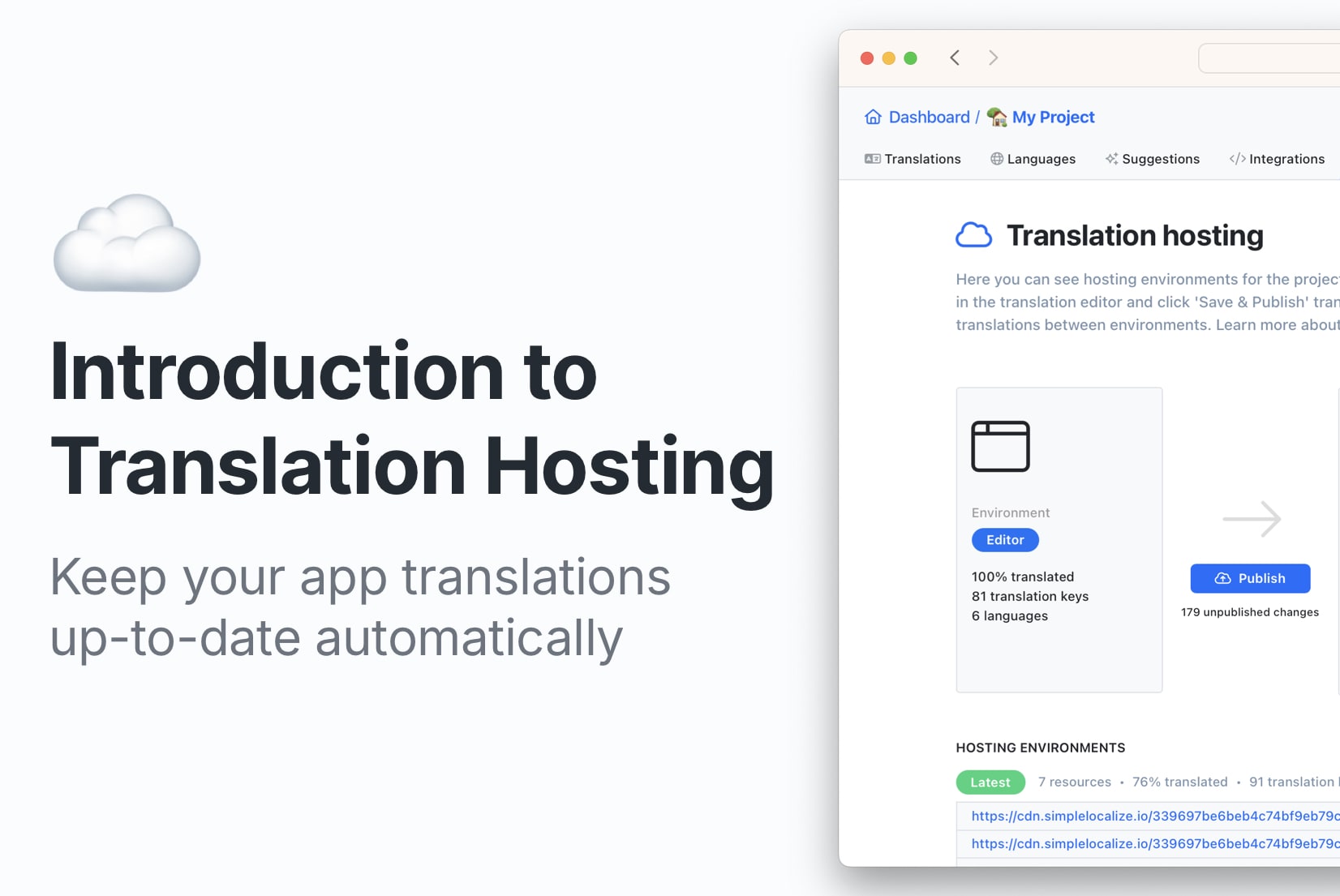

Built-in Editor

AI as your copilot, not a black box

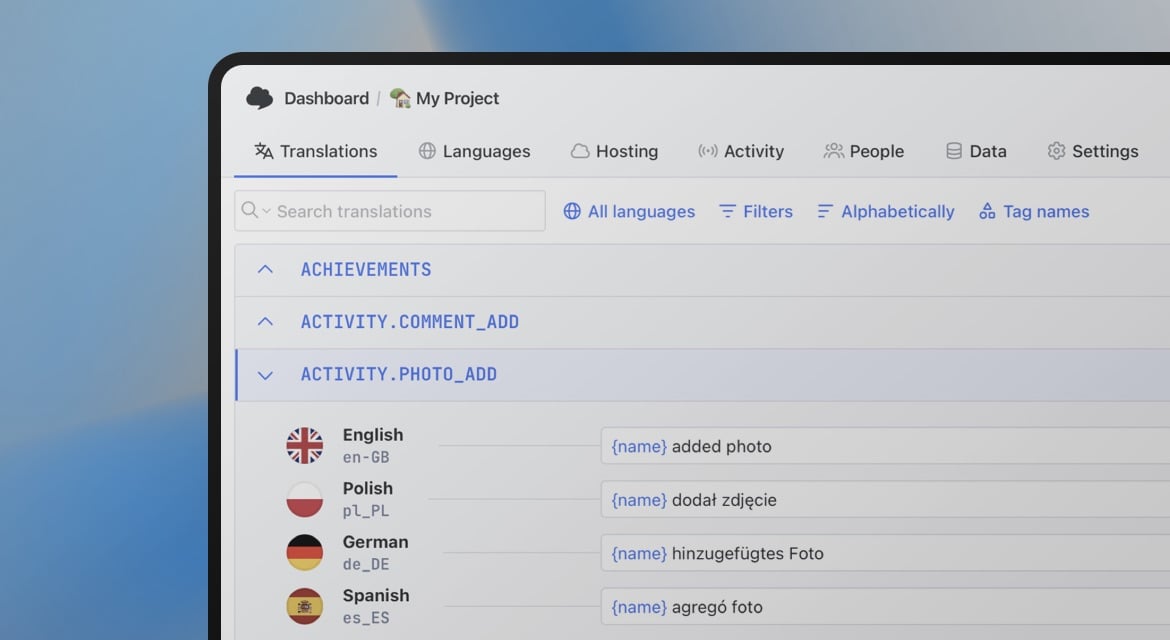

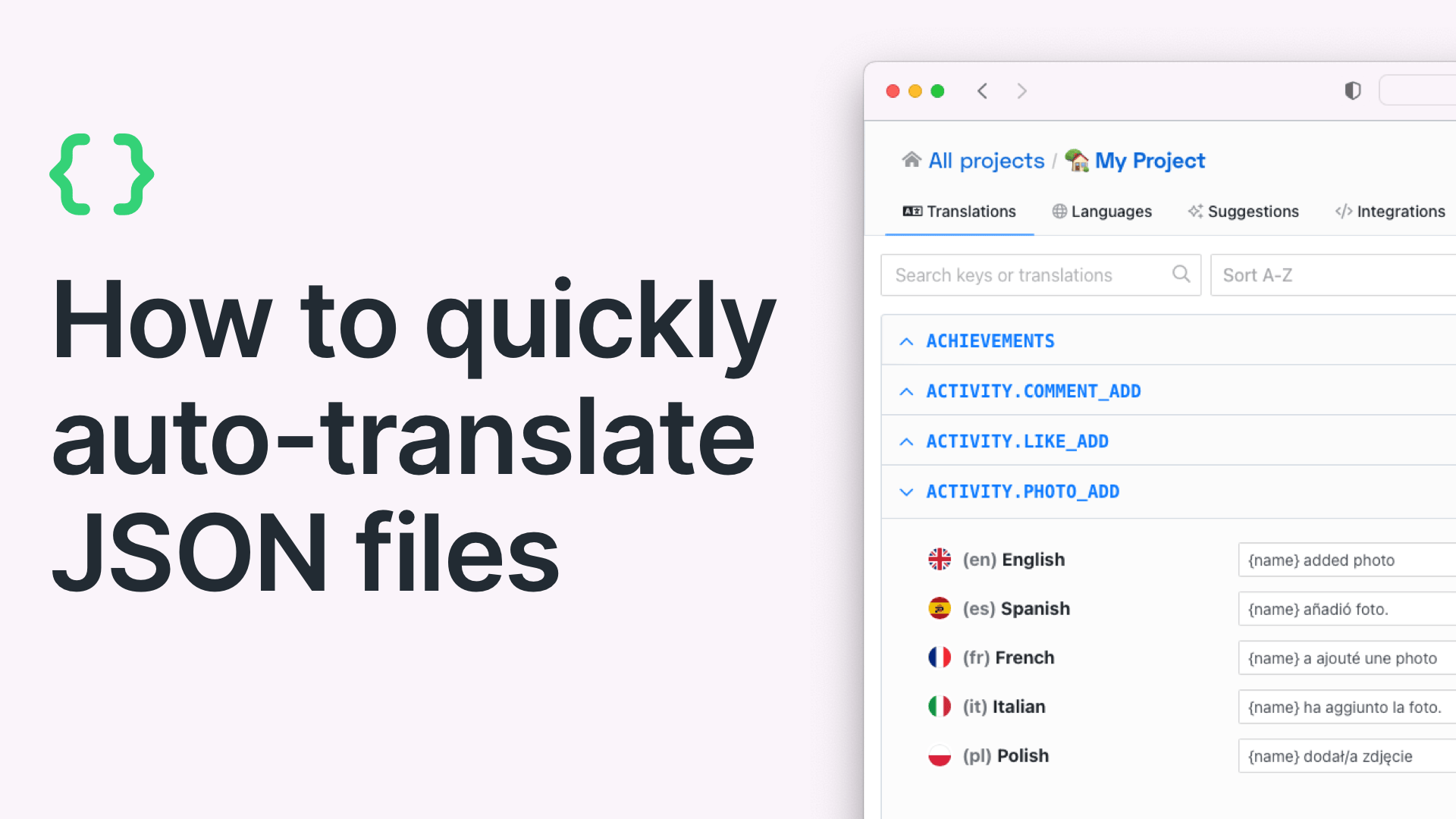

The SimpleLocalize editor puts AI translation controls right where you work: batch-translate missing keys, review suggestions side-by-side, or fix individual strings with one click.

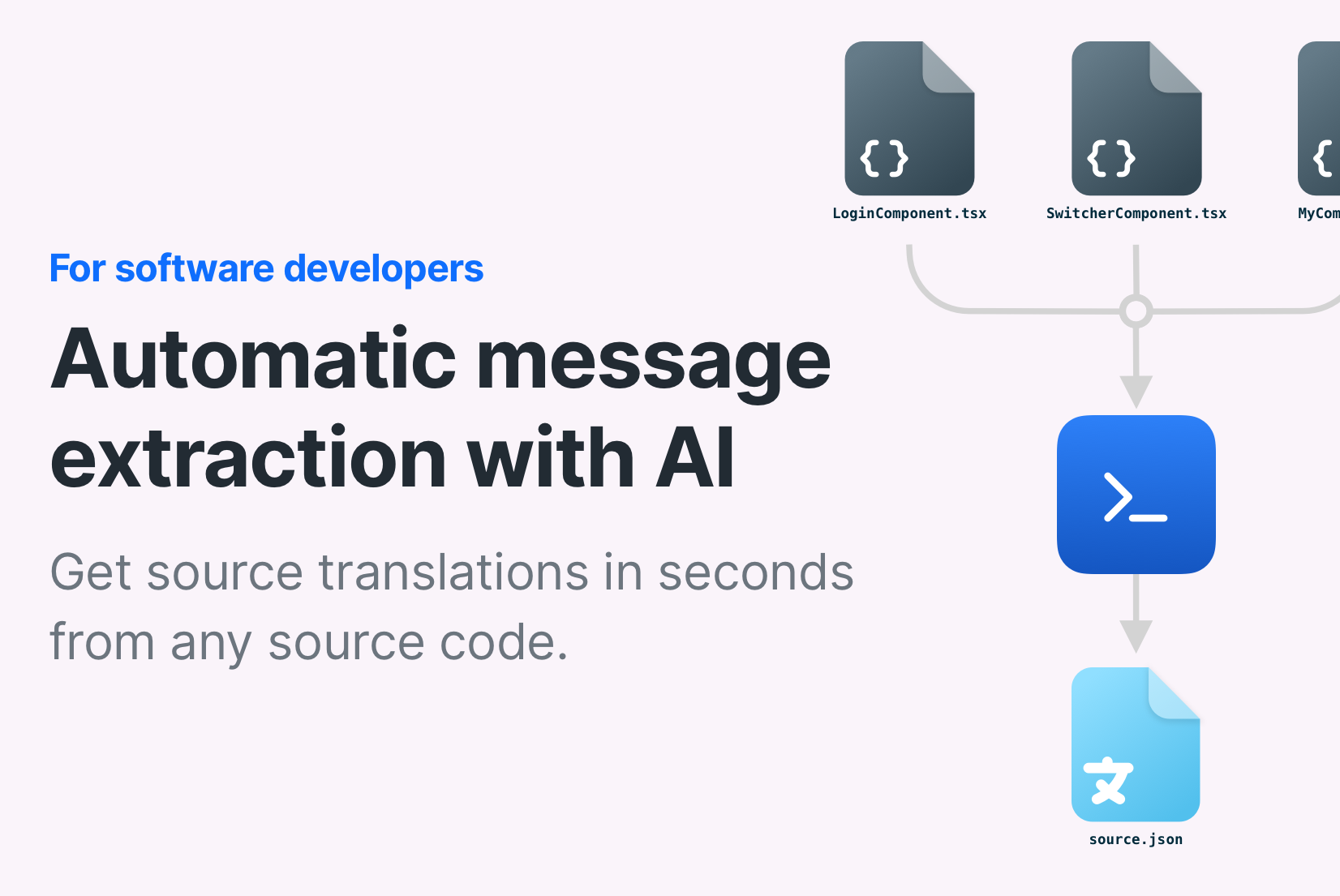

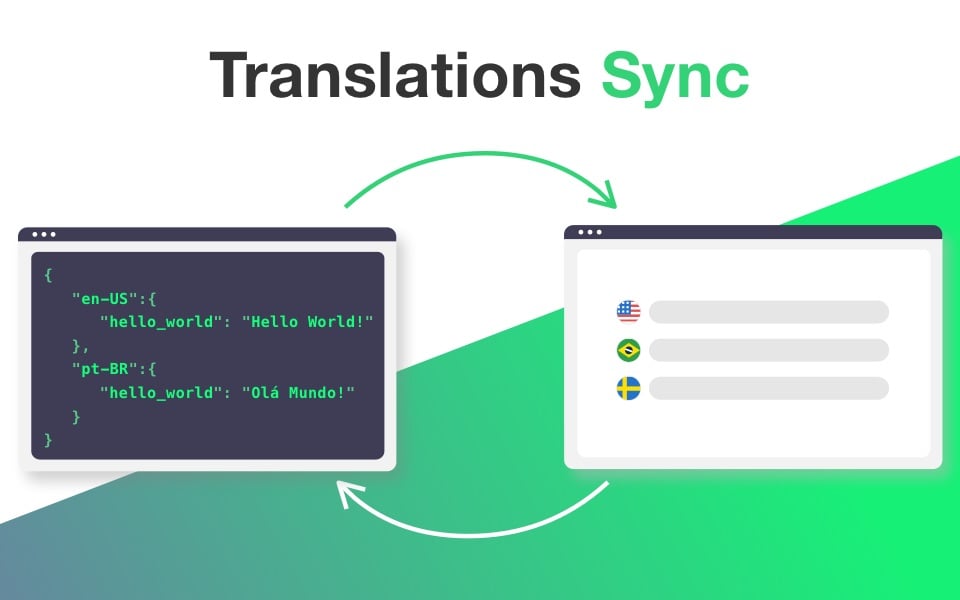

How it works

From source code to AI-translated app

A proven workflow to get your project translated with AI in minutes, not days.

Connect your codebase

Push translation keys from your repo using the CLI or CI/CD. SimpleLocalize auto-detects your file format and organizes keys by namespace.

Set project context

Describe your product, target audience, and tone. Add key-level descriptions and character limits where needed. This metadata feeds every AI request.

Choose your AI model

Pick OpenAI, Claude, Gemini, or any other model. Use DeepL or Google Translate as a fast baseline. Mix and match per language or task.

Translate & review

Run batch AI translation for missing keys. Review in the editor, approve or refine, then publish via CDN or export to your repo.

For a step-by-step walkthrough, see the getting started guide.

Ready to stop copy-pasting into ChatGPT?

Start a free project and AI-translate your first keys in minutes.

Start for free

Why SimpleLocalize

Built for the way AI localization actually works

Not just another "AI translate" button. A full pipeline from context to cache to delivery.

Start AI-powered localization today

- Gemini, Claude, OpenAI, or any model via OpenRouter

- Context-aware AI translations

- Smart caching — only translate changes

- MCP server for AI agent workflows

- Local AI & enterprise privacy options

"The product

and support

are fantastic."

"The support is

blazing fast,

thank you Jakub!"

"Interface that

makes any dev

feel at home!"

"Excellent app,

saves my time

and money"

What is AI-powered localization?

AI-powered localization uses large language models (LLMs) from OpenAI, Anthropic, Google, and others to translate software strings with contextual awareness. Unlike traditional machine translation (MT) that operates on isolated sentences, AI models can process metadata — project descriptions, key-level context, tone instructions, and character constraints — to produce translations that fit your product naturally.

SimpleLocalize bridges the gap between raw AI capability and production-ready localization by acting as a context layer and caching system between your codebase and the AI model. You define the context once, and every translation request inherits it automatically. Combined with smart caching that only re-translates changed keys, AI localization becomes both cost-effective and consistent.

How does the Context Engine improve AI translation quality?

The Context Engine sends structured metadata alongside every translation request. This includes the project description (e.g., “a fintech app for Gen Z”), key-level descriptions and screenshots, character limits, placeholder rules, and tone instructions (formal vs. informal).

This context prevents common AI translation errors — like translating “Home” as a building instead of a navigation button — without requiring you to write custom prompts for every string. Read more about how context improves AI translations.
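To make this concrete, here is a minimal Python sketch of how such metadata could be folded into a single translation prompt. The field names (`project_context`, `description`, `char_limit`, `tone`) and the prompt wording are assumptions made for illustration. They are not SimpleLocalize's actual request format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TranslationRequest:
    """Hypothetical container for one string plus its context."""
    key: str
    source_text: str
    target_language: str
    project_context: str = ""
    description: str = ""
    char_limit: Optional[int] = None
    tone: str = ""


def build_prompt(req: TranslationRequest) -> str:
    """Assemble a prompt that carries every piece of available context."""
    lines = [f"Translate the UI string below into {req.target_language}."]
    if req.project_context:
        lines.append(f"Product: {req.project_context}")
    if req.description:
        lines.append(f"Meaning of this string: {req.description}")
    if req.tone:
        lines.append(f"Tone: {req.tone}")
    if req.char_limit is not None:
        lines.append(f"Maximum length: {req.char_limit} characters")
    lines.append("Keep placeholders and HTML tags unchanged.")
    lines.append(f"String ({req.key}): {req.source_text}")
    return "\n".join(lines)


request = TranslationRequest(
    key="nav.home",
    source_text="Home",
    target_language="German",
    project_context="a fintech app for Gen Z",
    description="Navigation button that opens the main dashboard",
    char_limit=12,
    tone="informal",
)
prompt = build_prompt(request)
print(prompt)
```

With the description attached, the model has no reason to read "Home" as a building, and the project-level fields only need to be written once.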

What is OpenRouter and how does it work with SimpleLocalize?

OpenRouter is a unified API gateway that gives you access to dozens of AI models — Gemini, Claude, OpenAI, Llama, Mistral, and more — through a single endpoint. SimpleLocalize integrates with OpenRouter so you can switch between models without changing your workflow.

This means you can use fast, cheap models from Google for bulk translation of UI strings, and switch to Claude for nuanced marketing copy — all within the same project. You can also connect local models via Ollama for air-gapped, privacy-first translation workflows.
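Because OpenRouter exposes an OpenAI-style chat completions endpoint and identifies models by a `provider/model` string, switching models comes down to changing one field in the request. The Python sketch below illustrates per-task routing. The task names and model identifiers are deliberately fake placeholders, so substitute real identifiers from the OpenRouter catalog. It only builds the request body and does not describe how SimpleLocalize performs routing internally.

```python
from typing import Any, Dict

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Placeholder identifiers, NOT real models: replace with entries from the catalog.
MODEL_BY_TASK: Dict[str, str] = {
    "ui_bulk": "example/fast-model",
    "marketing": "example/premium-model",
}
DEFAULT_MODEL = "example/general-model"


def build_payload(task: str, prompt: str) -> Dict[str, Any]:
    """Pick a model for the task and build an OpenAI-style request body."""
    model = MODEL_BY_TASK.get(task, DEFAULT_MODEL)
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }


bulk = build_payload("ui_bulk", "Translate 'Save' into French.")
hero = build_payload("marketing", "Translate the landing page headline into French.")
other = build_payload("legal", "Translate the terms of service into French.")
print(bulk["model"], hero["model"], other["model"])
```

Each payload would be sent as a POST to `OPENROUTER_URL` with your API key in an `Authorization: Bearer` header. Only the `model` field differs between a bulk job and high-stakes copy.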

AI translations vs. machine translations (DeepL, Google Translate)

Machine translation (MT) services like DeepL and Google Translate use specialized neural networks trained specifically for translation. They are fast, predictable, and cost-effective for straightforward content. AI translations using LLMs offer deeper contextual understanding — they can follow tone instructions, respect character limits, and adapt to project-specific terminology.

In practice, the best approach is often hybrid: use MT as a fast baseline, then apply AI where context matters. SimpleLocalize supports both workflows — compare DeepL, Google Translate, and OpenAI to decide what fits your project.

What is MCP (Model Context Protocol) and how does it help with localization?

MCP (Model Context Protocol) is an open standard that allows AI agents to interact with external tools and data sources. SimpleLocalize provides an official MCP server that AI coding assistants — in Cursor, Windsurf, Claude Desktop, or any MCP-compatible client — can use to query, update, and translate localization data directly from the IDE.

This enables workflows where an AI agent can find missing translations, add new keys, and trigger context-aware AI translation without the developer switching tools. It's the bridge between AI-driven development and production-ready localization.

Frequently asked questions

Do I need a subscription to use AI translations?

No. AI translations are available to all users regardless of plan. You can provide your own OpenAI or OpenRouter API key and pay only for the tokens you consume. SimpleLocalize does not charge additional fees on top of the provider's pricing. For DeepL and Google Translate, a monthly character allowance is included in paid plans with unused characters rolling over.

Which AI models does SimpleLocalize support?

SimpleLocalize supports OpenAI models and any model available through OpenRouter, including Gemini, Claude, Llama, Mistral, and dozens more. You can also connect local models via Ollama or any OpenAI-compatible endpoint. Additionally, DeepL and Google Translate are available as machine translation providers.

How does smart caching save money on AI translations?

SimpleLocalize tracks which keys have been translated and which have changed since the last run. When you trigger AI translation, only new or modified keys are sent to the model — the rest are served from the translation memory cache. For a project with 2,000 keys where only 10 changed, you pay for 10 translations instead of 2,000. Use our auto-translation cost calculator to estimate savings.
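As a rough worked example of the 2,000-versus-10 scenario, the Python sketch below compares the two runs for a single target language. The token count per key and the price per million tokens are invented round numbers chosen only to make the arithmetic easy to follow. Real figures depend on your strings, your context, and your provider's pricing.

```python
def estimate_cost(keys: int, tokens_per_key: int, usd_per_million_tokens: float) -> float:
    """Estimated spend for translating `keys` strings into one language."""
    return keys * tokens_per_key * usd_per_million_tokens / 1_000_000


TOKENS_PER_KEY = 200     # assumed: prompt context plus output, per key
USD_PER_MILLION = 5.0    # assumed: blended price per million tokens

full_run = estimate_cost(2000, TOKENS_PER_KEY, USD_PER_MILLION)
delta_run = estimate_cost(10, TOKENS_PER_KEY, USD_PER_MILLION)

print(f"Re-translate everything: ${full_run:.2f}")
print(f"Translate only the delta: ${delta_run:.2f}")
```

Under these assumptions the full run costs $2.00 and the delta run costs $0.01 per language. The gap scales with the number of target languages and with how often you ship.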

Can I use AI translations with local or private models?

Yes. SimpleLocalize supports any OpenAI-compatible API endpoint. You can run models locally using Ollama (e.g., Llama, Mistral, Gemma) and point SimpleLocalize to your local endpoint. Your translation data never leaves your infrastructure — ideal for teams with strict data-privacy or regulatory requirements.
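For reference, this is roughly what a request to a local OpenAI-compatible endpoint looks like. The Python sketch below targets Ollama's default local address and its `/v1/chat/completions` route. The model name `llama3` is an assumption (use whichever model you have pulled), and the helper names are hypothetical. It shows the shape of the call, not how SimpleLocalize connects to your endpoint.

```python
import json
import urllib.request

# Ollama's default local address, using its OpenAI-compatible route.
LOCAL_ENDPOINT = "http://localhost:11434/v1/chat/completions"


def build_request(model: str, prompt: str,
                  endpoint: str = LOCAL_ENDPOINT) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        endpoint,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def translate(req: urllib.request.Request) -> str:
    """Send the request and return the reply. Needs a running local server."""
    with urllib.request.urlopen(req) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]


# Assumed model name; nothing is sent until translate(req) is called.
req = build_request("llama3", "Translate 'Log in' into German.")
print(req.full_url)
```

Since the endpoint resolves to `localhost`, the request never crosses your network boundary. Pointing `endpoint` at another OpenAI-compatible server inside your infrastructure works the same way.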

What is the MCP server and how do I use it?

The SimpleLocalize MCP server allows AI coding assistants (Cursor, Windsurf, Claude Desktop) to interact with your localization data directly from the IDE. Install the MCP extension, connect it to your SimpleLocalize project, and your AI agent can query missing translations, push new keys, and trigger auto-translation without switching tools.